In 1971, Intel revolutionized the world by launching the first commercial microprocessor, the ‘4004’. This chip boasted 2,300 transistors in a 16-pin DIL package and consumed less than half a watt from its 15V power rail. It heralded the modern era of mass computing, although at $60 (equivalent to around $460 in today’s money) it wasn’t cheap.

At the time, processor designers understood the relationship between clock speed and power consumption, but thermal design was not a concern: the IC remained barely warm even when running flat out at its maximum clock speed of 740kHz. Nor were engineers worried about the power rail’s 5% tolerance. With the IC typically drawing only 30mA, the voltage drop along PCB tracks was negligible, so the simple power supply could be placed wherever it was most convenient.
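As a quick check on these figures, the 4004’s power draw and its voltage margin can be worked out directly. This is a sketch only: the 0.1Ω track resistance is a hypothetical value chosen for illustration, not a figure from the original design.

$$
\begin{aligned}
P &= V I = 15\,\text{V} \times 30\,\text{mA} = 0.45\,\text{W} \\
\Delta V_{\text{tol}} &= 5\% \times 15\,\text{V} = 0.75\,\text{V} \\
\Delta V_{\text{track}} &= I R = 30\,\text{mA} \times 0.1\,\Omega = 3\,\text{mV} \ll 0.75\,\text{V}
\end{aligned}
$$

Even with a generous track resistance, the drop uses well under 1% of the allowed tolerance band.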

Contrast this with the latest AI-focused processors, such as the Nvidia GB200 ‘Superchip’, which has more than 200 billion transistors, dissipates over 2.5kW at peak, and is fed from a sub-1V power rail that must deliver thousands of amps. Keeping the die temperature within safe limits with some form of cooling has now become a critical factor in key data center metrics: processing performance, server footprint, power usage effectiveness (PUE), cost effectiveness, and environmental impact. Power rails must also be generated by high-efficiency DC/DC converters located directly at the processor to minimize voltage drops and resistive losses.
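To see why the converters must sit at the processor, the sketch below applies Ohm’s law to both eras. It is illustrative only and rests on stated assumptions: a hypothetical 0.1mΩ supply path used for both chips, an assumed 0.8V rail (the text says only “sub-1V”), and the simplification that the full 2.5kW is drawn from that single rail.

```python
def supply_current_a(power_w: float, rail_v: float) -> float:
    """Current drawn from a rail: I = P / V."""
    return power_w / rail_v


def ir_drop(current_a: float, path_ohm: float, rail_v: float) -> tuple[float, float]:
    """Voltage lost across the supply path (V = I * R), absolute and as a fraction of the rail."""
    drop_v = current_a * path_ohm
    return drop_v, drop_v / rail_v


if __name__ == "__main__":
    # Hypothetical 0.1 milliohm path between supply and processor, same for both cases.
    path_ohm = 1e-4
    cases = {
        # 4004: 15V rail drawing about 30mA (figures from the text).
        "4004": (15.0, 0.030),
        # GB200-class: assumed 0.8V rail carrying the whole 2.5kW (a simplification).
        "GB200-class": (0.8, supply_current_a(2500.0, 0.8)),
    }
    for name, (rail_v, current_a) in cases.items():
        drop_v, frac = ir_drop(current_a, path_ohm, rail_v)
        print(f"{name}: {current_a:,.2f} A, drop {drop_v * 1e3:.3g} mV "
              f"({frac * 100:.3g}% of rail)")
```

Under these assumptions, the 4004 loses about 3µV across the path, while the sub-1V rail carries 3,125A and loses about 0.31V, roughly 39% of its nominal voltage. The same copper that was irrelevant in 1971 becomes unworkable at thousands of amps.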

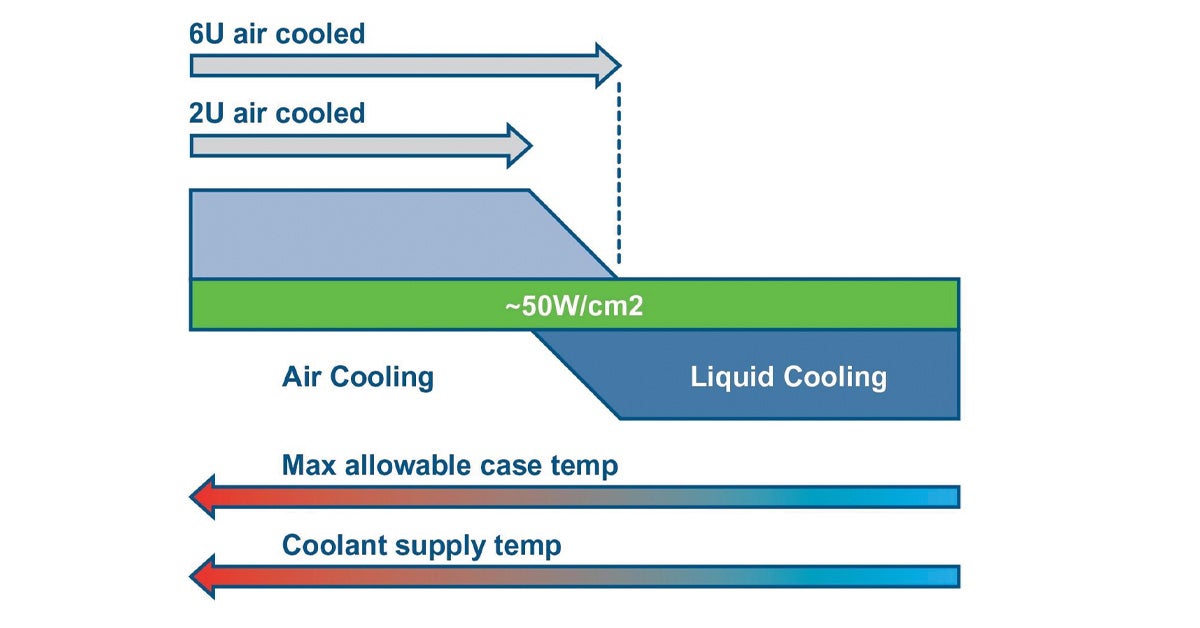

Up to a certain power level, processor heat is typically managed with forced air. The die is thermally connected to a heat spreader plate on top of the IC, which in turn is attached to a finned heatsink, either directly or through simple heat pipes leading to an adjacent heatsink. Airflow from system fans carries the heat away, typically exhausting it into the surrounding room, and the building’s air conditioning then removes it from there at a considerable energy cost. This cooling energy is reflected in the PUE metric, defined as total facility energy divided by the energy used by IT equipment, with a value of 1.5 being common. At a PUE of 1.5, a third of all energy (0.5 out of every 1.5 units) goes to non-IT overhead, most of it cooling. As many data centers now draw over 100 MW, that could mean more than 33 MW spent on cooling alone, a massive cost with a significant environmental impact.
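The PUE arithmetic can be expressed as a small helper. This is a minimal sketch: the function names are hypothetical, and it treats all non-IT energy as overhead without separating cooling from other losses.

```python
def overhead_fraction(pue: float) -> float:
    """Fraction of total facility energy that does not reach IT equipment.

    PUE = total / IT, so (total - IT) / total = 1 - 1 / PUE.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be less than 1.0")
    return 1.0 - 1.0 / pue


def overhead_power_mw(total_facility_mw: float, pue: float) -> float:
    """Upper-bound estimate of non-IT (mostly cooling) power for a facility."""
    return total_facility_mw * overhead_fraction(pue)


if __name__ == "__main__":
    # Figures from the text: a 100 MW facility running at a PUE of 1.5.
    total_mw, pue = 100.0, 1.5
    print(f"Overhead share at PUE {pue}: {overhead_fraction(pue):.1%}")
    print(f"Non-IT power: {overhead_power_mw(total_mw, pue):.1f} MW")
```

Counting all overhead as cooling gives an upper bound; in practice, part of it is lost in power conversion and distribution.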