Companies are recognizing AI as a competitive differentiator, and use cases are proliferating. Powerful GPUs have captured imaginations and wallet share in the race to process extraordinary amounts of data faster. The technology is sensational, but what about the other hardware that makes AI computing possible?

If compute is the brain of the digital world, networking is the central nervous system — and it’s undergoing a significant transformation of its own. Welcome to the era of high-performance AI networking.

Why does AI require specialized networking?

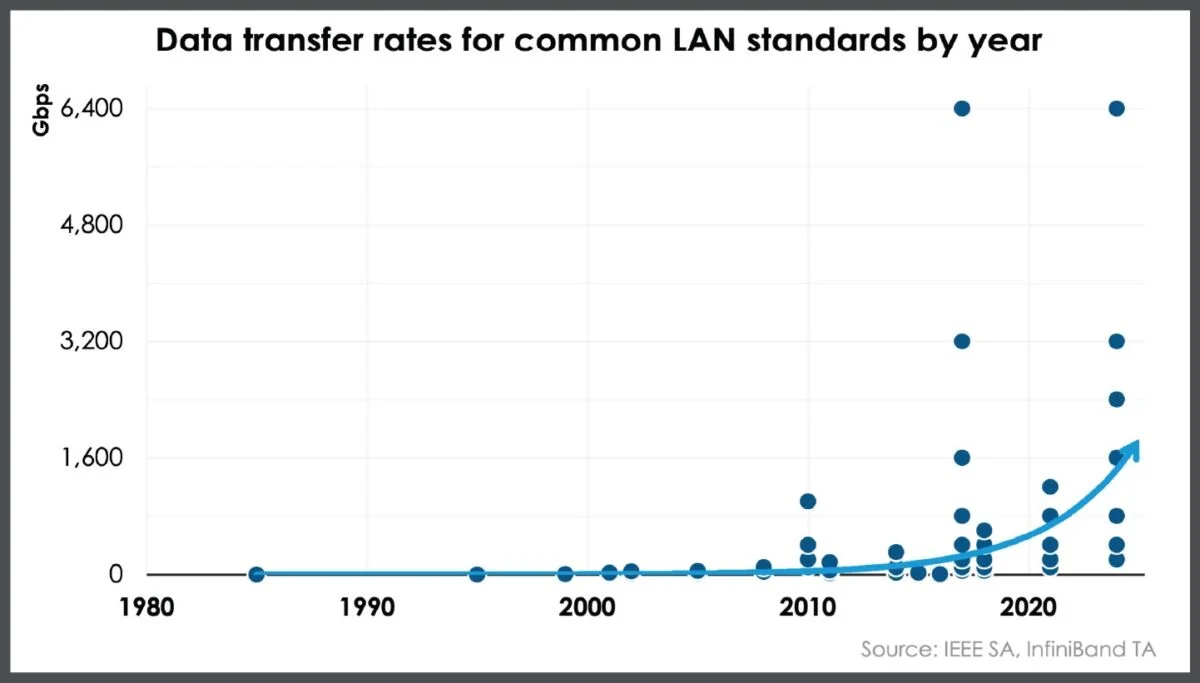

GPUs are very expensive, especially in the quantities required for large AI workloads. High-performance, rack-scale supercomputer platforms with 70-plus GPUs on board drive millions in capex. Enterprises and cloud providers investing heavily in advanced chipsets don’t want network bottlenecks to slow them down. A network that was once fine for business application data traveling at 25 gigabits per second (Gbps) is woefully inadequate when traffic approaches 1,600 Gbps (1.6 terabits per second), as is common during AI training. A high-bandwidth, low-latency AI network infrastructure is non-negotiable.
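To put the gap between 25 Gbps and 1,600 Gbps in concrete terms, here is a rough back-of-the-envelope sketch in Python. It assumes an idealized link with no protocol overhead, congestion, or latency, and the 1 TB payload is a hypothetical figure chosen only for illustration, not a measurement from any real training job.

```python
def transfer_seconds(payload_bytes: float, link_gbps: float) -> float:
    """Idealized time to move a payload across a link.

    Ignores protocol overhead, congestion, and latency (assumption).
    """
    payload_bits = payload_bytes * 8
    link_bits_per_second = link_gbps * 1e9
    return payload_bits / link_bits_per_second


# Hypothetical payload: 1 TB of data exchanged between GPUs (assumption).
PAYLOAD_BYTES = 1e12

for gbps in (25, 1600):
    seconds = transfer_seconds(PAYLOAD_BYTES, gbps)
    print(f"{gbps:>5} Gbps: {seconds:.1f} s")
```

Under these simplified assumptions, the same payload takes roughly 320 seconds at 25 Gbps and about 5 seconds at 1,600 Gbps, a 64x difference. Real-world numbers will vary, but the ratio shows why expensive GPUs can sit idle when the network can't keep up.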

LAN data transfer rates have grown exponentially over the past decade, and some standards are expected to reach speeds of up to 25.6 Tbps in 2026. That trajectory reinforces the need for data center operators to adopt the latest technologies and future-proof their sites.